[LLM] LangChain

LangChain is one of the major open-source frameworks for agent development.

It makes it more convenient to build LLM-based agent systems.

At the time of writing, the only officially supported languages are Python and TypeScript.

Most LLMs can be plugged in. Integration packages are provided for the major providers such as Gemini, OpenAI, and Anthropic.

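LangChain vs LangGraph

Whenever LangChain comes up, LangGraph is mentioned alongside it.

By comparison, LangChain is a relatively simple framework aimed at straightforward use cases, while LangGraph is a more complex framework that wires agents together as a graph so that richer interactions can be implemented.

If your system does not feel that complex, it seems fine to start with LangChain and move over once you find it lacking.

Both are built by the same open-source group.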

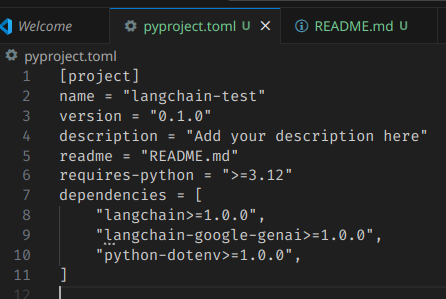
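To make "wiring agents together as a graph" a bit more concrete, here is a minimal sketch in plain Python. It is not LangGraph's API: the names `NODES`, `EDGES`, and `run` are made up for illustration, and the "agents" are stand-in functions rather than LLM calls. It only shows the idea that nodes transform a shared state and edges decide which node runs next.

```python
from typing import Callable, Optional

State = dict[str, str]
Node = Callable[[State], State]


def classify(state: State) -> State:
    # Stand-in for an LLM call: treat anything ending in "?" as a help request.
    intent = "help" if state["input"].rstrip().endswith("?") else "smalltalk"
    return {**state, "intent": intent}


def help_agent(state: State) -> State:
    return {**state, "output": f"[help] {state['input']}"}


def smalltalk_agent(state: State) -> State:
    return {**state, "output": f"[smalltalk] {state['input']}"}


# Nodes are named steps; each edge inspects the state and names the next node.
NODES: dict[str, Node] = {
    "classify": classify,
    "help": help_agent,
    "smalltalk": smalltalk_agent,
}
EDGES: dict[str, Callable[[State], Optional[str]]] = {
    "classify": lambda s: s["intent"],  # conditional edge
    "help": lambda s: None,             # None means "stop"
    "smalltalk": lambda s: None,
}


def run(state: State, start: str = "classify") -> State:
    """Walk the graph from the start node until an edge returns None."""
    current: Optional[str] = start
    while current is not None:
        state = NODES[current](state)
        current = EDGES[current](state)
    return state


if __name__ == "__main__":
    print(run({"input": "How do I install it?"})["output"])
    print(run({"input": "Good morning"})["output"])
```

The point of the design is that adding a new agent means adding one node and one edge, rather than another branch in hand-written control flow.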

Installation (with Gemini)

Let's assume we are using Gemini and give it a run.

Install langchain together with the dedicated integration package for the LLM you plan to use:

    uv add langchain langchain-google-genai

The dependency structure is not complicated.

Basic usage

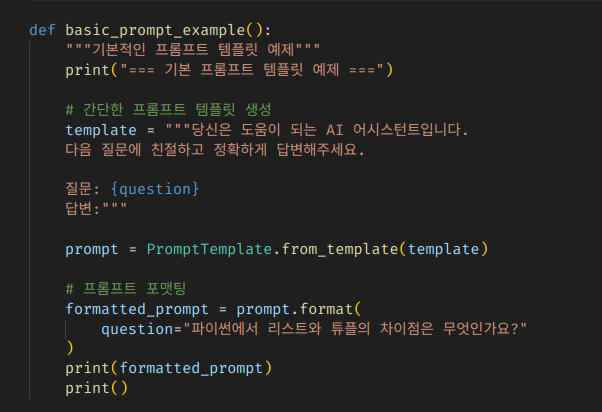

It may be called an agent framework, but the foundation underneath is very simple.

At the bottom, it boils down to a template string generator.

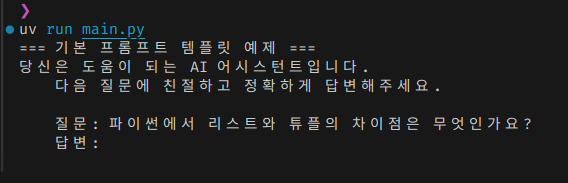

The following code is a simple example that builds a prompt from a template without calling an LLM.

    from dotenv import load_dotenv
    from langchain_core.prompts import PromptTemplate

    # Load environment variables
    load_dotenv()


    def basic_prompt_example():
        """Basic prompt template example"""
        print("=== Basic prompt template example ===")

        # Create a simple prompt template
        template = """You are a helpful AI assistant.
    Please answer the following question kindly and accurately.

    Question: {question}

    Answer:"""

        prompt = PromptTemplate.from_template(template)

        # Format the prompt
        formatted_prompt = prompt.format(
            question="What is the difference between a list and a tuple in Python?"
        )
        print(formatted_prompt)
        print()


    def main():
        """Main function"""
        basic_prompt_example()


    if __name__ == "__main__":
        main()

You simply prepare a prompt template with holes punched in it, then inject the values at the point of use.

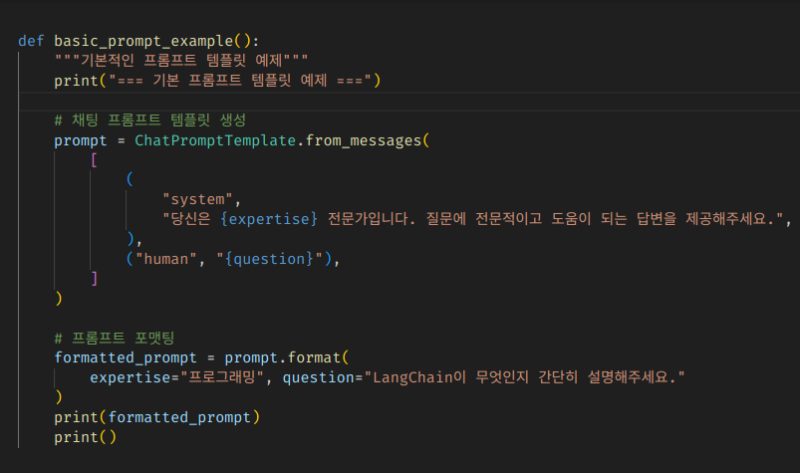
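The "template with holes" idea can be reproduced with nothing but the standard library. The sketch below is not how LangChain implements `PromptTemplate`; the helper names `placeholders` and `fill` are hypothetical and exist only to show the mechanism: find the named holes, check that every one has a value, then substitute.

```python
from string import Formatter

TEMPLATE = "You are a helpful AI assistant.\nQuestion: {question}\nAnswer:"


def placeholders(template: str) -> list[str]:
    """Return the names of the {holes} in a template, in order of appearance."""
    return [name for _, name, _, _ in Formatter().parse(template) if name]


def fill(template: str, **values: str) -> str:
    """Fill every hole, failing loudly if a value is missing."""
    missing = [name for name in placeholders(template) if name not in values]
    if missing:
        raise KeyError(f"missing template variables: {missing}")
    return template.format(**values)


if __name__ == "__main__":
    print(placeholders(TEMPLATE))
    print(fill(TEMPLATE, question="What is a tuple?"))
```

Checking for missing variables up front gives a clearer error than letting `str.format` fail partway through.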

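Let's go a little further.

When you write prompts with chat in mind, you quite often need to pass your own instructions and the user's text as a sequence of messages.

In that case you can use ChatPromptTemplate and pass the messages as a list of tuples.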

from dotenv import load_dotenv

from langchain_core.prompts import ChatPromptTemplate

# 환경변수 로드

load_dotenv()

<br>

def basic_prompt_example():

"""기본적인 프롬프트 템플릿 예제"""

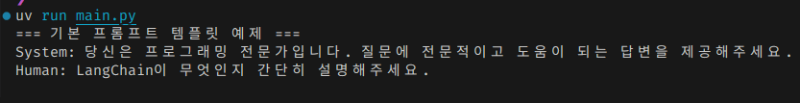

print("=== 기본 프롬프트 템플릿 예제 ===")

# 채팅 프롬프트 템플릿 생성

prompt = ChatPromptTemplate.from_messages(

[

(

"system",

"당신은 {expertise} 전문가입니다. 질문에 전문적이고 도움이 되는 답변을 제공해주세요.",

),

("human", "{question}"),

]

)

# 프롬프트 포맷팅

formatted_prompt = prompt.format(

expertise="프로그래밍", question="LangChain이 무엇인지 간단히 설명해주세요."

)

print(formatted_prompt)

print()

<br>

def main():

"""메인 함수"""

basic_prompt_example()

<br>

if __name__ == "__main__":

main()

The result is split line by line, with each message rendered as a header:value pair separated by a colon.

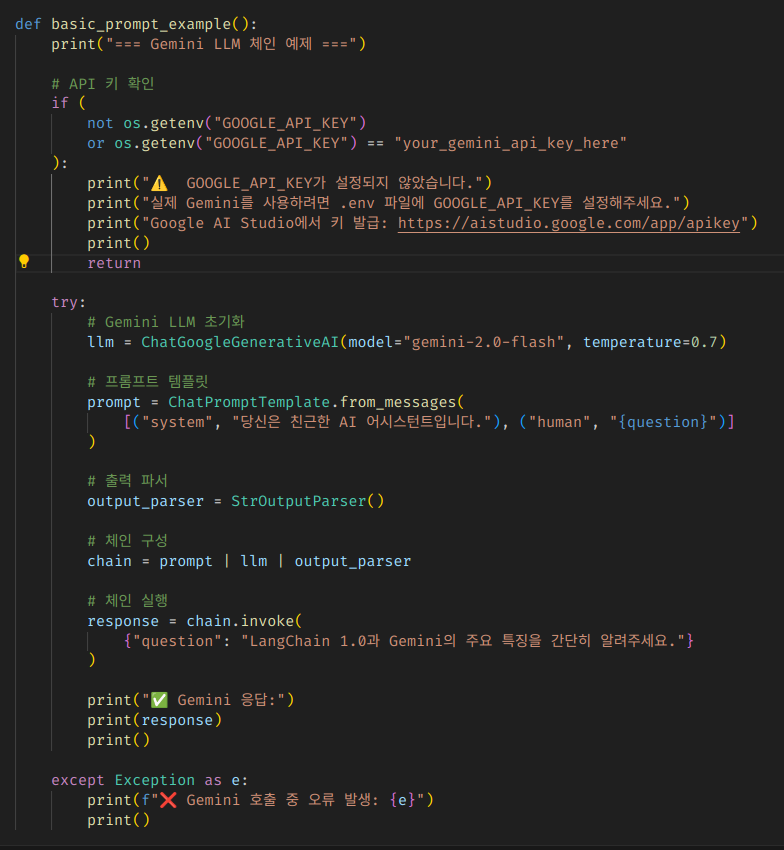
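The line-per-message rendering described above can be imitated in a few lines of plain Python. This is only a sketch of the idea, not LangChain's implementation: the `render` function and the `ROLE_LABELS` mapping are assumptions chosen to resemble the "header: value" output.

```python
Message = tuple[str, str]

# Hypothetical mapping from role keys to the header printed before each message.
ROLE_LABELS = {"system": "System", "human": "Human", "ai": "AI"}


def render(messages: list[Message], **values: str) -> str:
    """Fill each message template and emit one 'Header: text' line per message."""
    lines = []
    for role, template in messages:
        label = ROLE_LABELS.get(role, role.capitalize())
        lines.append(f"{label}: {template.format(**values)}")
    return "\n".join(lines)


if __name__ == "__main__":
    chat = [
        ("system", "You are an expert in {expertise}."),
        ("human", "{question}"),
    ]
    print(render(chat, expertise="programming", question="What is LangChain?"))
```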

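This time, let's actually attach an LLM and send the prompt.

The Gemini API key has to be set as an environment variable; the code below reads it from GOOGLE_API_KEY.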

    import os

    from dotenv import load_dotenv
    from langchain_core.output_parsers import StrOutputParser
    from langchain_core.prompts import ChatPromptTemplate
    from langchain_google_genai import ChatGoogleGenerativeAI

    # Load environment variables
    load_dotenv()


    def basic_prompt_example():
        print("=== Gemini LLM chain example ===")

        # Check the API key
        api_key = os.getenv("GOOGLE_API_KEY")
        if not api_key or api_key == "your_gemini_api_key_here":
            print("⚠️ GOOGLE_API_KEY is not set.")
            print("To use Gemini for real, set GOOGLE_API_KEY in your .env file.")
            print("Get a key from Google AI Studio: https://aistudio.google.com/app/apikey")
            print()
            return

        try:
            # Initialize the Gemini LLM
            llm = ChatGoogleGenerativeAI(model="gemini-2.0-flash", temperature=0.7)

            # Prompt template
            prompt = ChatPromptTemplate.from_messages(
                [("system", "You are a friendly AI assistant."), ("human", "{question}")]
            )

            # Output parser
            output_parser = StrOutputParser()

            # Build the chain
            chain = prompt | llm | output_parser

            # Run the chain
            response = chain.invoke(
                {"question": "Briefly tell me the key features of LangChain 1.0 and Gemini."}
            )

            print("✅ Gemini response:")
            print(response)
            print()
        except Exception as e:
            print(f"❌ Error while calling Gemini: {e}")
            print()


    def main():
        """Main function"""
        basic_prompt_example()


    if __name__ == "__main__":
        main()

The interesting part here is that the call flow is defined with a chain expression.

They have defined their own custom syntax, built on the | operator, for the process of sending the prompt to the LLM and parsing its response.

Calling invoke then runs that pipeline in order and returns the result.

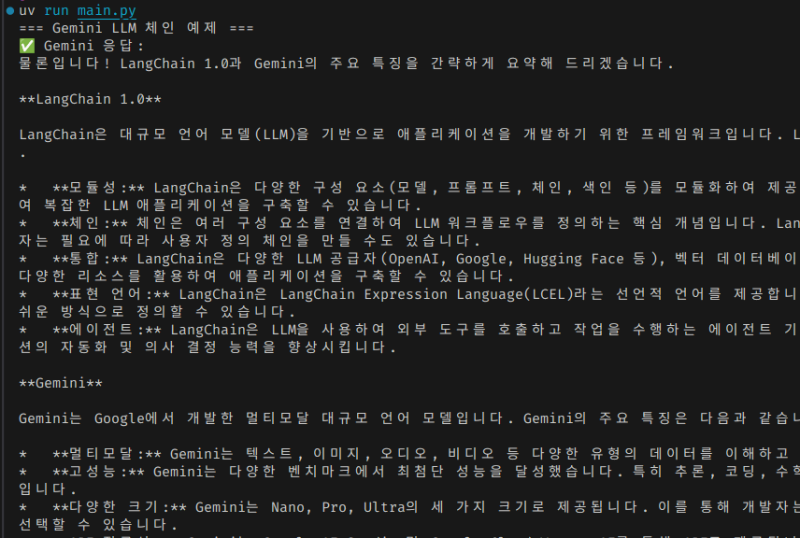

It worked fine.
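The `prompt | llm | output_parser` syntax is ordinary Python operator overloading. The sketch below shows the general technique with a hypothetical `Step` class that overloads `__or__`. It is not LangChain's actual class hierarchy, and `fake_llm` is a stand-in that just upper-cases its input instead of calling a model.

```python
from typing import Any, Callable


class Step:
    """A unit of work that can be composed with other steps using `|`."""

    def __init__(self, func: Callable[[Any], Any]) -> None:
        self.func = func

    def __or__(self, other: "Step") -> "Step":
        # `a | b` builds a new step that runs a first, then feeds its result to b.
        return Step(lambda value: other.invoke(self.invoke(value)))

    def invoke(self, value: Any) -> Any:
        return self.func(value)


# Stand-ins for the three stages of the chain in the example above.
prompt = Step(lambda data: f"Human: {data['question']}")
fake_llm = Step(lambda text: {"content": text.upper()})
parser = Step(lambda message: message["content"])

chain = prompt | fake_llm | parser
result = chain.invoke({"question": "hello"})

if __name__ == "__main__":
    print(result)
```

Nothing runs when the chain is built; the composed functions only execute when `invoke` is called, which is why the chain can be defined once and reused with different inputs.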

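Multi-agent implementation

LangChain itself was not designed with a multi-agent structure in mind.

You can of course build one by implementing the multi-agent workflow yourself and wiring it in, but it is not directly supported.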

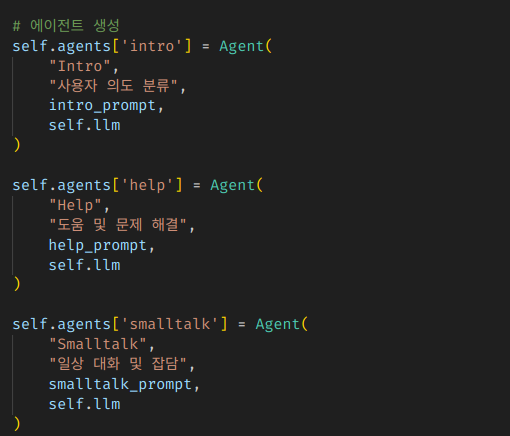

To set up a multi-agent structure using LangChain alone, you have to lay out the whole structure yourself, like this.

First you initialize the agents.

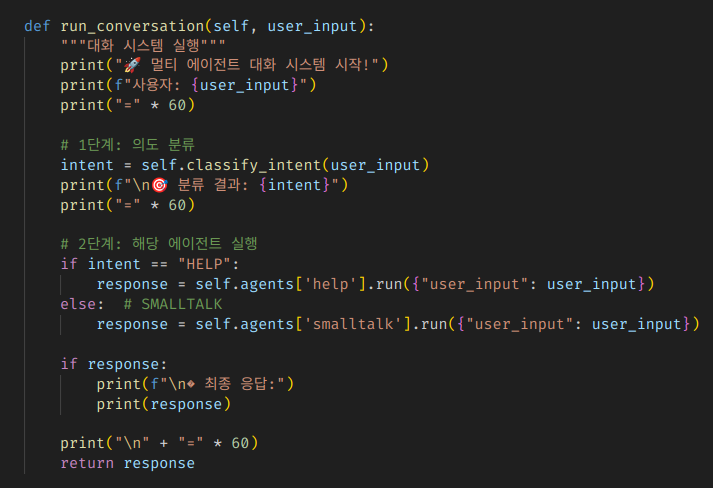

Then you have to hand-write every branch and follow-up action that depends on each agent's result.

LangGraph is what provides this kind of thing in a structured form.

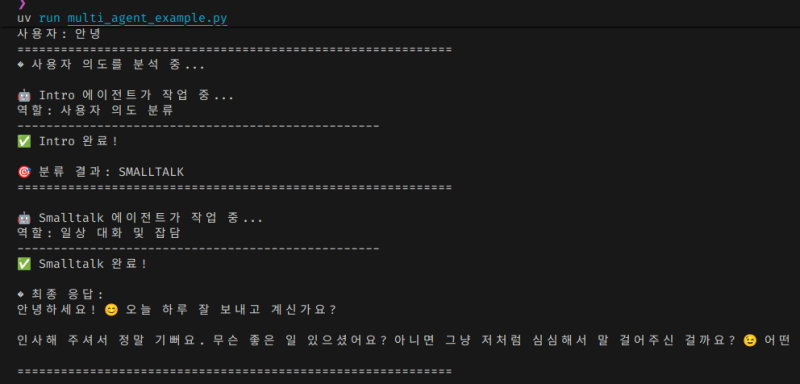

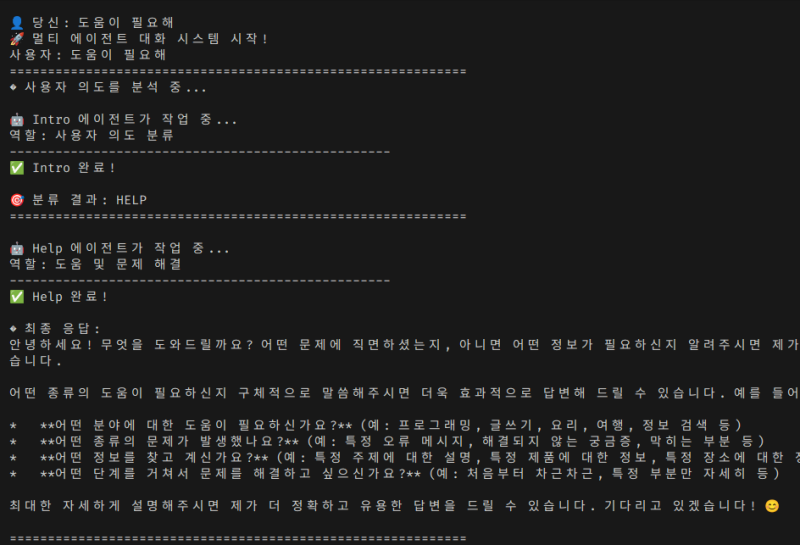

It does work.
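The hand-written branching step is where this approach tends to be fragile, because the routing depends on parsing free-form text from the classifier. The following is a minimal sketch of a stricter parse and dispatch, assuming the classifier's reply carries its label on a line of the form `<key>: <LABEL>`. The function names, the `label_key` parameter, and its default value are hypothetical and not part of LangChain.

```python
from typing import Callable

VALID_LABELS = ("HELP", "SMALLTALK")


def parse_intent(raw: str, label_key: str = "classification", default: str = "HELP") -> str:
    """Read the label only from the '<label_key>: <LABEL>' line of the reply."""
    for line in raw.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip().lower() == label_key:
            label = value.strip().upper()
            if label in VALID_LABELS:
                return label
    return default  # fall back when the reply does not follow the format


def route(raw: str, handlers: dict[str, Callable[[str], str]], user_input: str) -> str:
    """Dispatch the user input to the handler chosen by the classifier reply."""
    return handlers[parse_intent(raw)](user_input)


if __name__ == "__main__":
    reply = "Classification: SMALLTALK\nReason: the user did not ask for help"
    handlers = {
        "HELP": lambda text: f"[help] {text}",
        "SMALLTALK": lambda text: f"[smalltalk] {text}",
    }
    print(route(reply, handlers, "Nice weather today"))
```

A plain substring check such as `"HELP" in reply.upper()` would match the word "help" in the reason line of that sample reply, which is why the sketch looks only at the label line.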

Full example code

    """
    A multi-agent example based on intent classification, built with LangChain's basic features only

    This example is structured as follows:
    1. Intro agent: classifies the intent of the user input (HELP vs SMALLTALK)
    2. Help agent: gives an expert answer when help, a question, or problem solving is needed
    3. Smalltalk agent: responds in a friendly way to everyday conversation, greetings, and chit-chat

    Each agent has its own role and prompt,
    and the appropriate specialist agent is chosen based on the Intro agent's classification.
    """

    import os

    from dotenv import load_dotenv
    from langchain_google_genai import ChatGoogleGenerativeAI
    from langchain_core.prompts import ChatPromptTemplate
    from langchain_core.output_parsers import StrOutputParser

    # Load environment variables
    load_dotenv()


    class Agent:
        """A simple agent class"""

        def __init__(self, name, role, prompt_template, llm):
            self.name = name
            self.role = role
            self.prompt = ChatPromptTemplate.from_template(prompt_template)
            self.llm = llm
            self.output_parser = StrOutputParser()
            self.chain = self.prompt | self.llm | self.output_parser

        def run(self, input_data):
            """Run the agent"""
            print(f"\n🤖 {self.name} agent is working...")
            print(f"Role: {self.role}")
            print("-" * 50)

            try:
                result = self.chain.invoke(input_data)
                print(f"✅ {self.name} done!")
                return result
            except Exception as e:
                print(f"❌ {self.name} error: {str(e)}")
                return None


    class MultiAgentSystem:
        """Multi-agent system based on intent classification"""

        def __init__(self, llm):
            self.llm = llm
            self.agents = {}
            self.setup_agents()

        def setup_agents(self):
            """Set up the agents"""

            # 1. Intro agent - intent classification
            intro_prompt = """
            You are an expert at analyzing user intent.
            Analyze the user's input and classify it as one of the following:

            Classification options:
            - HELP: help or problem solving is needed (questions, requests for guidance, tutorials, troubleshooting, etc.)
            - SMALLTALK: everyday conversation or chit-chat (greetings, weather, expressing feelings, personal stories, etc.)

            User input: {user_input}

            Response format:
            Classification: [HELP or SMALLTALK]
            Reason: [one-line explanation of the classification]

            Just make the classification clear.
            """

            # 2. Help agent - help and problem solving
            help_prompt = """
            You are a kind and knowledgeable helper.
            Help the user with their question or problem.

            User question: {user_input}

            Please provide help as follows:
            - Give a clear and specific answer
            - Provide a step-by-step guide when needed
            - Include additional notes or tips
            - Use explanations that are easy to understand

            Answer in a professional yet friendly manner.
            """

            # 3. Smalltalk agent - everyday conversation
            smalltalk_prompt = """
            You are a friendly and highly empathetic conversation partner.
            Have a natural everyday conversation with the user.

            User said: {user_input}

            Please converse as follows:
            - Use a warm and friendly tone
            - Express appropriate emotion and empathy
            - Keep the conversation flowing naturally
            - Ask related questions or expand the topic when needed

            Make the conversation comfortable and enjoyable.
            """

            # Create the agents
            self.agents['intro'] = Agent(
                "Intro",
                "Classify user intent",
                intro_prompt,
                self.llm
            )

            self.agents['help'] = Agent(
                "Help",
                "Help and problem solving",
                help_prompt,
                self.llm
            )

            self.agents['smalltalk'] = Agent(
                "Smalltalk",
                "Everyday conversation and chit-chat",
                smalltalk_prompt,
                self.llm
            )

        def classify_intent(self, user_input):
            """Classify the user's intent"""
            print("Analyzing user intent...")

            result = self.agents['intro'].run({"user_input": user_input})

            if not result:
                return "HELP"  # default

            # Parse the classification result
            if "HELP" in result.upper():
                return "HELP"
            elif "SMALLTALK" in result.upper():
                return "SMALLTALK"
            else:
                return "HELP"  # default

        def run_conversation(self, user_input):
            """Run the conversation system"""
            print("🚀 Multi-agent conversation system started!")
            print(f"User: {user_input}")
            print("=" * 60)

            # Step 1: classify the intent
            intent = self.classify_intent(user_input)
            print(f"\n🎯 Classification result: {intent}")
            print("=" * 60)

            # Step 2: run the matching agent
            if intent == "HELP":
                response = self.agents['help'].run({"user_input": user_input})
            else:  # SMALLTALK
                response = self.agents['smalltalk'].run({"user_input": user_input})

            if response:
                print("\nFinal response:")
                print(response)
                print("\n" + "=" * 60)

            return response


    def main():
        print("🔗 LangChain intent-classification multi-agent system")
        print("=" * 50)

        # Check the API key
        api_key = os.getenv("GOOGLE_API_KEY")
        if not api_key:
            print("❌ GOOGLE_API_KEY is not set.")
            print("💡 Please set the API key in your .env file.")
            return

        try:
            # Initialize the Gemini model
            llm = ChatGoogleGenerativeAI(
                model="gemini-2.0-flash",
                temperature=0.7,
                google_api_key=api_key
            )

            # Create the multi-agent system
            multi_agent = MultiAgentSystem(llm)

            print("The system is ready!")
            print("\n💡 Usage:")
            print("- Ask a question or request help → the Help agent responds")
            print("- Say hello or make small talk → the Smalltalk agent responds")
            print("- Type 'quit' or '종료' to exit")
            print("\n" + "=" * 50)

            # Interactive loop
            while True:
                try:
                    user_input = input("\n👤 You: ").strip()

                    if user_input.lower() in ['quit', 'exit', '종료', '끝']

References

https://docs.langchain.com/oss/python/langchain/overview

https://www.samsungsds.com/kr/insights/what-is-langchain.html